Most evaluations of major incident management tools focus on features and pricing. The criteria that actually predict whether a tool will reduce your mean time to resolution (MTTR) are more specific, and they depend on who's asking and what your estate looks like today.

The real cost of using the wrong incident management tooling isn't the license fee; it's downtime. Every minute a major incident runs longer than it should, revenue stops, customers notice, service level agreements (SLAs) are breached, and your team burns out trying to compensate for tools that weren't built to execute a response.

So, before you look at feature matrices and vendor demos, ask your business a harder question: what is a 1% reduction in MTTR actually worth to you? What about 10%? What about 28%?
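One rough way to put a number on that question is to multiply the minutes saved by what a minute of major-incident downtime costs you. The sketch below is illustrative only: the incident count, baseline MTTR, and cost per minute are hypothetical placeholders, and the model assumes downtime cost scales linearly with incident duration. Substitute your own figures.

```python
def annual_mttr_savings(incidents_per_year, mttr_minutes, cost_per_minute, reduction):
    """Estimate the yearly downtime cost avoided by a fractional MTTR reduction.

    Assumes cost scales linearly with incident duration (a simplification).
    """
    minutes_saved = incidents_per_year * mttr_minutes * reduction
    return minutes_saved * cost_per_minute


# Hypothetical inputs, for illustration only.
INCIDENTS_PER_YEAR = 24   # major incidents per year
MTTR_MINUTES = 120        # current average time to resolution
COST_PER_MINUTE = 5_000   # downtime cost per minute, in your currency

for reduction in (0.01, 0.10, 0.28):
    saved = annual_mttr_savings(
        INCIDENTS_PER_YEAR, MTTR_MINUTES, COST_PER_MINUTE, reduction
    )
    print(f"{reduction:.0%} MTTR reduction: roughly {saved:,.0f} per year")
```

Even a crude estimate like this gives the evaluation a financial baseline to weigh license fees against.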

These questions aren’t hypothetical. MTTR reduction is the business case framing that should anchor every tool decision you make in this space. Faster resolution means protected revenue, preserved reputation, reduced regulatory exposure, and an operations team that can scale with confidence rather than just survive the next incident.

Evaluations benefit from including the people who live inside a P1: the executive who needs to understand the blast radius, the Major Incident Manager keeping 300 people coordinated, and the Site Reliability Engineer (SRE) knee-deep in log correlation. Tools that look identical on a feature matrix can feel completely different from each seat. This article outlines the six criteria that predict MTTR impact and the specific questions each role involved in resolution needs to ask, to help you choose the right tool for your organization.

🎯 The six criteria that predict MTTR impact

Before getting into the role-specific questions, here are the six capability dimensions that separate platforms that genuinely reduce MTTR from those that just give you better visibility into how slowly you're resolving. Use these as your shortlist filter.

- Automated team mobilization: Does it get the right people engaged and executing, or just paged? Alerting is not mobilization.

- Structured task execution: Every task should have an owner, a sequence, a dependency, and a live status. If that doesn’t exist, your team is self-coordinating in chat.

- Real-time stakeholder visibility: Executives should be able to self-serve incident status without interrupting the people resolving it.

- AI agents in the response: Not advisory AI sitting alongside the process, but agents invoked by the runbook at the right moment, feeding outputs back into the response alongside all other tasks and teams. (Find out more about how AI agents reduce MTTR.)

- Immutable audit trail: Built automatically as the incident runs, not reconstructed from Slack logs after the fact. Non-negotiable for regulated industries.

- Post-incident learning: Every incident should improve the next one. AI linked to resolution outcomes means your response gets measurably faster over time.
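To make the structured task execution criterion concrete, here is a minimal sketch of what "an owner, a sequence, a dependency, and a live status" could look like as data. It is not any vendor's data model: the `Task` class, the `Status` values, and the example runbook are all hypothetical, and list order stands in for sequence.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    DONE = "done"


@dataclass
class Task:
    name: str
    owner: str
    depends_on: list[str] = field(default_factory=list)  # names of prerequisite tasks
    status: Status = Status.PENDING


def startable(tasks):
    """Pending tasks whose dependencies are all done."""
    done = {t.name for t in tasks if t.status is Status.DONE}
    return [
        t for t in tasks
        if t.status is Status.PENDING and all(d in done for d in t.depends_on)
    ]


def blocked(tasks):
    """Pending tasks still waiting on at least one unfinished dependency."""
    done = {t.name for t in tasks if t.status is Status.DONE}
    return [
        t for t in tasks
        if t.status is Status.PENDING and any(d not in done for d in t.depends_on)
    ]


# Hypothetical runbook, listed in execution order.
runbook = [
    Task("Declare incident", "incident-manager", status=Status.DONE),
    Task("Fail over database", "dba-on-call", ["Declare incident"], Status.IN_PROGRESS),
    Task("Restart API tier", "sre-on-call", ["Fail over database"]),
    Task("Notify customers", "comms-lead", ["Declare incident"]),
]

print("Can start now:", [t.name for t in startable(runbook)])
print("Blocked:", [t.name for t in blocked(runbook)])
```

When this information lives in the tool rather than in people's heads, "what's blocked and who owns it" becomes a query, not a question shouted across a bridge call.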

👔 What do executives need from incident response tooling?

If you’re a CIO, VP of operations, or other executive involved in major incident management, your role isn't passive. You're accountable for the speed and quality of recovery, for the maturity of your organization's resilience posture, and for the business impact every outage leaves behind. The right tooling should give you the visibility and confidence to drive that, not just report on it after the fact.

👔 Questions for CIOs, VP Ops, and executives

Q: Can you see the exact status of a major incident, right now, without calling anyone?

Not a stale ticket. Not a summary someone emailed twenty minutes ago. A live view: what's been done, what's blocked, and what the estimated resolution time is. If your tooling requires a human to produce that for you, it's costing you resolution time on every single incident.

Q: When the board asks what happened, how long does it take to produce the answer?

The audit trail should be a byproduct of execution, built automatically as the incident runs. If your team is reconstructing timelines from Slack logs and email threads after the fact, that's a compliance risk as much as an operational one.

Q: Does automation improve with every incident, or does each one start from scratch?

Static processes decay. The right platform links outcomes back to response patterns, so the organization gets measurably faster over time, not just better documented.

If your current answer to any of these involves someone manually compiling a report or joining a bridge call, you’re absorbing unnecessary risk, both operationally and in front of regulators.

Read the CIO and CTO’s guide to reducing MTTR.

🚨 What do Major Incident Managers need from incident response tooling?

If you’re a Major Incident Manager, you’re the person in the room when everything is on fire. The tools you have either help you stay in control or add to the chaos. Most add to the chaos.

🚨 Questions for Major Incident Managers

Q: How much of your time during a P1 is spent giving status updates instead of managing the response?

Every time an exec joins the bridge to ask for an update, you stop managing the incident. Multiply that across a three-hour outage and the interruption cost is significant. Your tooling should give stakeholders self-serve visibility, so you can stay focused on resolution.

Q: When an incident fires, where are the relevant insights, and how long does it take to surface them?

The context you need might be buried in a ServiceNow ticket, a PagerDuty alert, an AWS health event, or a log your DevOps agent flagged twenty minutes ago. If you're manually correlating signals across four systems in the middle of a P1, that delay is directly increasing your MTTR. Does your platform pull that into a single operational view automatically or do you have to go hunting?

Q: Once you know who needs to act, how do you know they actually jumped on it?

Sending a page or a Slack message isn't mobilization. Mobilization means the right people are engaged, have the right context, have accepted their tasks, and are executing, and that you can see all of that in real time. If your process relies on chasing people to confirm they've picked something up, that's a tooling gap, not a people problem.
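The difference between paged and mobilized can be expressed as a simple check. The sketch below is illustrative, not a real product's API: the `Responder` fields and the five-minute grace period are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Responder:
    name: str
    paged_at: datetime
    accepted_at: Optional[datetime] = None  # set when they accept their task


def not_mobilized(responders, now, grace=timedelta(minutes=5)):
    """Responders paged longer ago than `grace` who have not accepted a task."""
    return [
        r for r in responders
        if r.accepted_at is None and now - r.paged_at > grace
    ]


# Hypothetical 3am P1: three people paged at the same moment.
t0 = datetime(2024, 1, 1, 3, 0)
responders = [
    Responder("dba-on-call", paged_at=t0, accepted_at=t0 + timedelta(minutes=2)),
    Responder("network-on-call", paged_at=t0),
    Responder("app-owner", paged_at=t0),
]

# Ten minutes in, list everyone who was paged but never picked up their task.
for r in not_mobilized(responders, now=t0 + timedelta(minutes=10)):
    print(f"{r.name}: paged but not yet engaged")
```

A platform that tracks acceptance and execution automatically makes this list visible without anyone having to chase.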

Q: Can any engineer run a high-quality response, or does it depend on who's on call?

If the answer relies on one or two senior engineers who know the system, you have a hero culture problem. The right tooling puts a structured, sequenced runbook in front of every responder, so a 3am P1 doesn't hinge on one person being available.

The Major Incident Manager (MIM) questions are where most tools fail hardest. Alerting gets people paged. Ticketing logs the incident. Neither tells you who's doing what, whether they've started, or where the critical path is blocked. That is the orchestration gap, and it is what causes MTTR to go up.

⚙️ What do DevOps and SREs need from incident response tooling?

If you’re a DevOps or Site Reliability Engineer, you care about reliability, speed of recovery, and the quality of the tools you're handed to do your job under pressure. You care about visibility into what's happening across the stack, and about not spending a live P1 doing work that a machine should be doing: repeatedly updating tickets, chasing log outputs, running the same health checks you ran last time. That repetitive, low-value work that consumes engineer time during an incident is what's meant by toil, and the right tooling eliminates it.

⚙️ Questions for DevOps and SREs

Q: Does your tooling eliminate toil, or just relocate it?

Toil is the repetitive, manual work that doesn't scale: running the same log checks every incident, updating tickets by hand, re-running health checks that could be automated. During a P1, these tasks eat minutes your team doesn't have. The right platform automates them, so your engineers are diagnosing and resolving, not performing admin under pressure.

Q: Does it work with your existing stack, or ask you to rebuild around it?

Your monitoring tools, ITSM, cloud estate, and AI agents already exist. A good execution layer plugs into all of them, surfacing context from each system into a single operational view, without requiring you to rip out what works. The question isn't whether a tool has integrations listed; it's whether the critical data from those integrations can be surfaced in real time and within the right context to actually change how engineers respond.

Every hour an engineer spends on manual coordination during an incident is an hour not spent on resolution, or on the reliability improvements that would prevent the next incident.

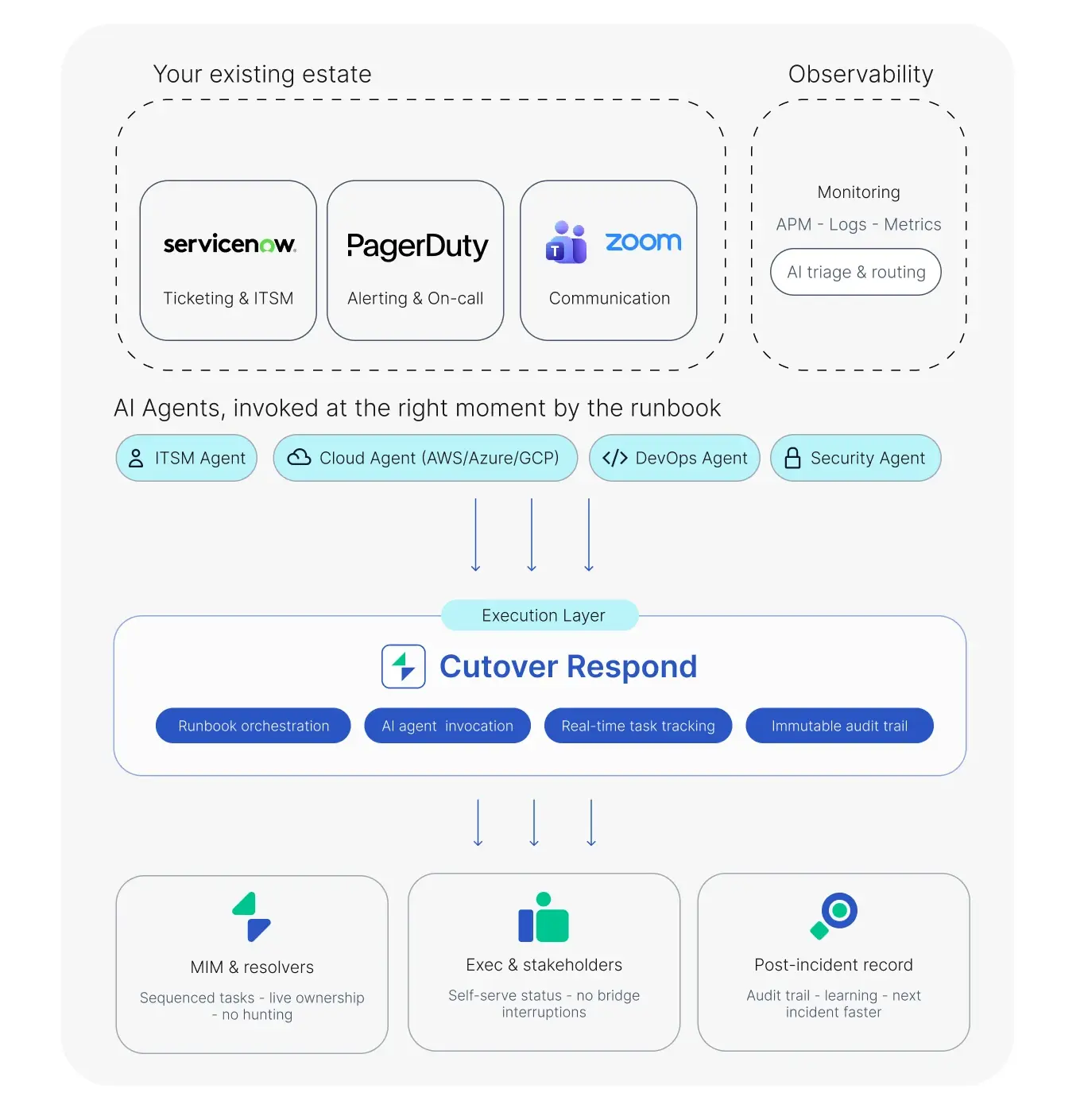

🔌 Cutover Respond: The execution layer to reduce MTTR

Cutover Respond doesn't replace your existing tooling; it sits across it as the execution layer, combining automated runbooks with live dashboards, AI agents, and automatically generated audit logs. AI agent outputs feed back into the runbook alongside every other task, team, and signal in the response, providing the visibility and control needed to reduce MTTR.

Here’s how Cutover Respond combines with your existing estate and AI agents to give team members at every level what they need to successfully reduce MTTR:

For a deeper dive into this topic, read What are the best incident management tools for major IT disruptions?